"The challenge wasn't the person using sign language. The real barrier was that the systems around us weren't designed to understand them."

"The idea behind Allytriz began with a moment that made me look at communication differently.

I was visiting a friend’s home when I met someone in the family who communicated through sign language. Everyone around him could interact with him easily, but I couldn’t. For the first time, I experienced what a communication barrier actually feels like.

What struck me was that the challenge wasn’t the person using sign language. The real barrier was that the systems around us weren’t designed to understand them.

That moment stayed with me.

Growing up, I had always been interested in building things and experimenting with technology to solve real-world problems. Over time, that curiosity turned into a question: what if technology could help translate human gestures into communication that anyone could understand?

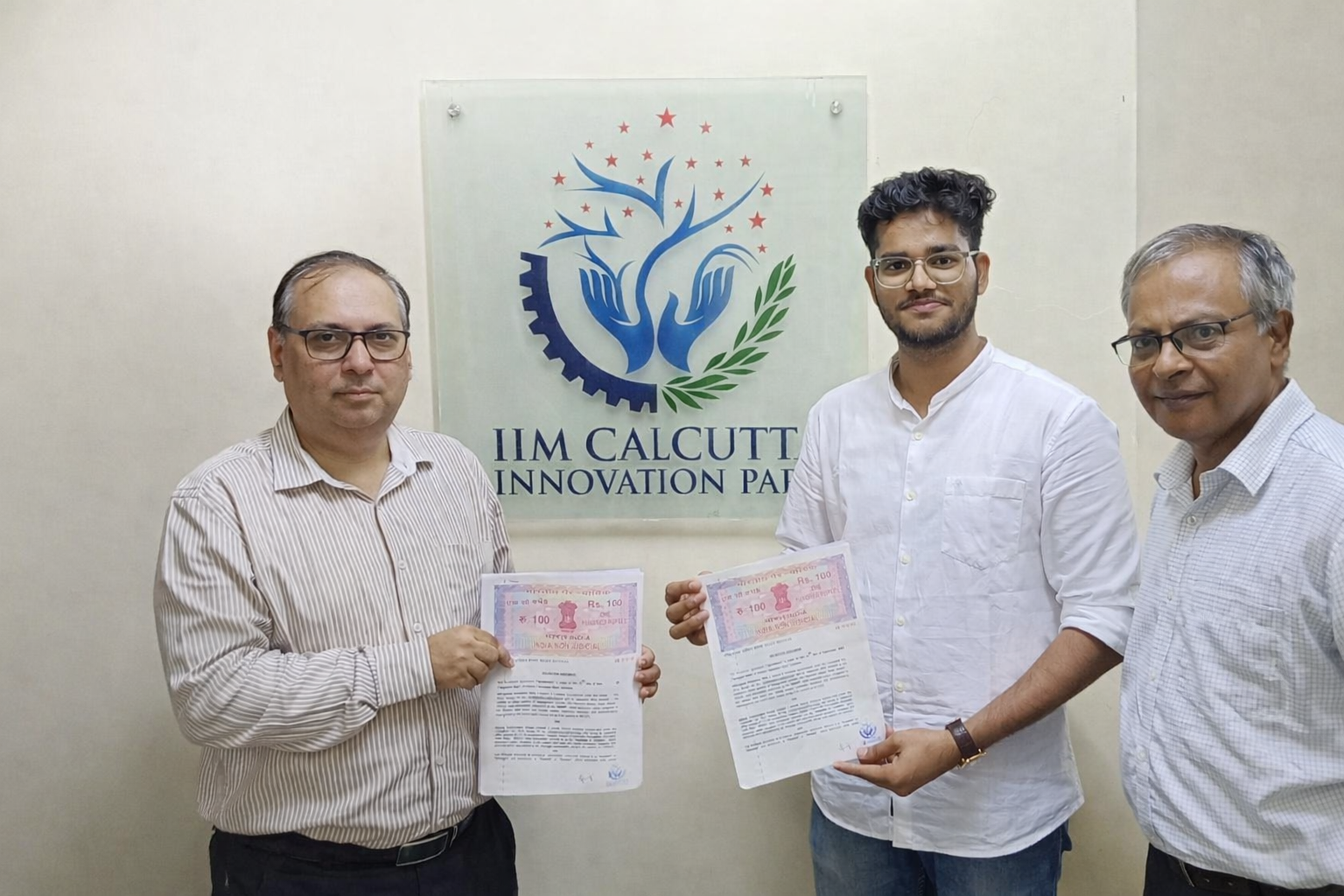

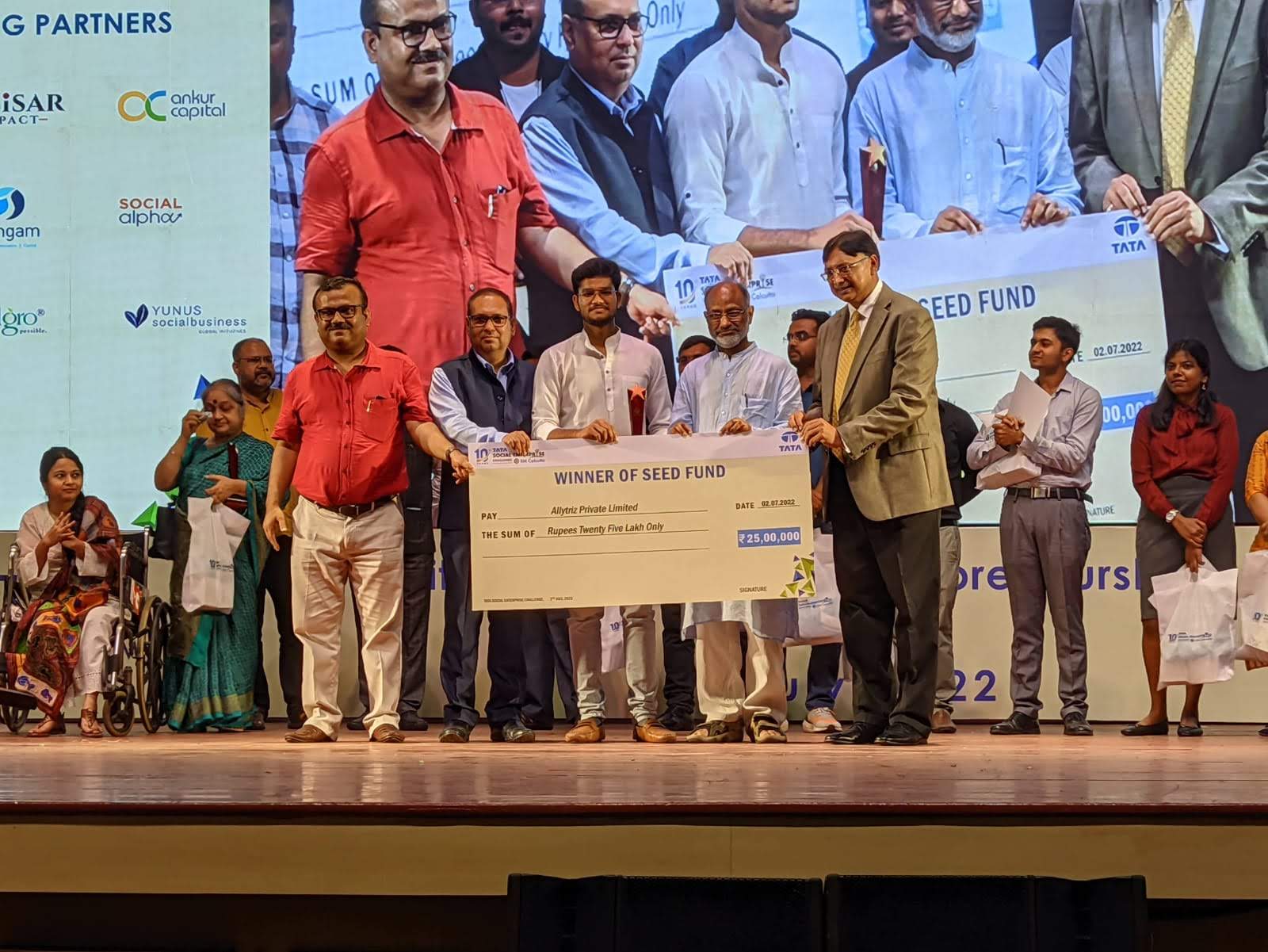

That question eventually led to the creation of Allytriz.

Today, we are developing gesture-based communication technologies that enable more inclusive interaction, starting with assistive communication systems and expanding toward broader human–machine interfaces.

Our goal is simple: to build technology that allows people and systems to understand human intent more naturally.

Because communication should never depend on who understands which language."